OpenAI & Google Announcements

Accessibility, Affordability and Advancements, oh my!

Welcome to the first edition of our weekly AI news, which will be delivered every Friday, so you can keep up to date with the latest in AI.

This week OpenAI and Google brought us two massive announcements that will reshape how we interact with Generative AI capabilities.

OpenAI’s new model is available now (I got access this morning), while Google’s many new features will roll out gradually over the remainder of the year.

So here is a breakdown of the latest announcements and what they mean for you.

OpenAI Announcements

OpenAI introduces GPT-4o

GPT-4o is a new, free AI model from OpenAI with capabilities similar to GPT-4, but it runs twice as fast. It will be available in the browser and through the mobile and desktop apps. It can handle inputs and outputs in text, audio, and visual formats. However, like other free AI offerings, there will be usage limits for free users.

Here are some of the features worth noting:

Multimodal inputs and outputs: Accepts prompts via text, audio, or images and can generate responses in those formats. This seamless multimodal integration unlocks new possibilities like AI-assisted content creation and data analysis across different mediums.

Web search access: It can now generate outputs by combining its own knowledge with web searches via Bing, which is useful for faster research and fact-finding. However, be wary of potential AI hallucinations and check the information it gives you before relying on it.

Understand real-time images and vision: It will be able to analyse and understand what you can see. This feature is easier to appreciate by watching it in action than by reading a description.

Memory: GPT-4o remembers context from previous conversations, eliminating the need to repeatedly provide background information. This context memory is especially valuable for anyone running their own business and juggling multiple projects and clients.

Voice Mode: It accepts natural voice prompts, capturing tone and expression for conversational interactions, like the AI in the movie "Her." OpenAI CEO Sam Altman shared his use case: he can ask questions and retrieve information by voice without switching away from what he is working on at his laptop.

Accessible: GPT-4o is a new, free model accessible to everyone, but with limitations similar to Claude's free version. There's a cap on the number of messages free users can send, after which the system automatically switches to the older GPT-3.5 model to continue conversations.

Google Introduces the Gemini Era

In a nutshell, Google is in its Gemini Era: it is integrating its Gemini models into more of its products to enhance their capabilities.

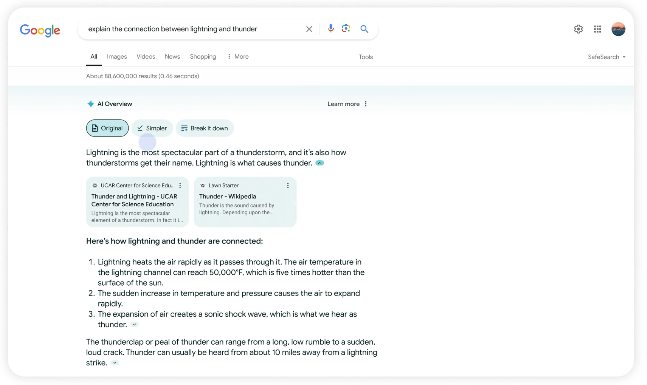

Search (AI Overview): It will be able to quickly summarise results from multiple sources, let you adjust the response, and handle multi-step reasoning. For example, you could type this into the search bar: "Find the best yoga or pilates studios in Boston and show details on their intro offers and walking time from Beacon Hill".

Credit: Google

Project Astra: An AI assistant that can process multimodal information (text, vision and audio) and understand the context you’re in, responding naturally in conversation.

Gemini Workspace Integration: Next month, Gemini will integrate with Google Workspace apps like Gmail and Docs for paid subscribers. It offers a new side panel to chat with Gemini, summarise, analyse, and generate content using insights from your emails/documents without leaving the app. This AI assistance can streamline workflows and boost productivity for those relying on Google Workspace.

Ask Photos: A new way to search your photos using Gemini. You can ask questions such as "remind me of all the networking events I have been to" and get helpful answers, saving you the time you would otherwise spend locating or organising images.

Credit: Google

AI Teammate: Gemini can act as a customisable AI co-worker that you name and instruct to perform specific roles and complete objectives. It can join team chats, understand context, and respond to queries based on prior discussions. This virtual collaborator could be transformative for teams, because it can pick up tasks and contribute with the full context of earlier conversations.

Labs.google: Introducing Veo & Imagen 3, taking on OpenAI’s Sora and DALL-E

Veo will be able to generate high-quality, 1080p resolution videos that can go beyond a minute.

Imagen 3 will be Google’s highest quality text-to-image model, capable of generating images with even better detail, richer lighting and fewer distracting artifacts than its previous models.

There you have it: a simple explanation of the two biggest announcements this week.

Of all the features, I am most intrigued by how Search AI Overview will impact SEO and the experience of searching for results in a new and quick way.

P.S. Reply and let me know which feature you’re most excited about!

Talk soon, Jessica
